[Reels] LCM-Lookahead for Encoder-based Text-to-Image Personalization

BayesianBacteria 2024. 10. 15. 21:55

Summary

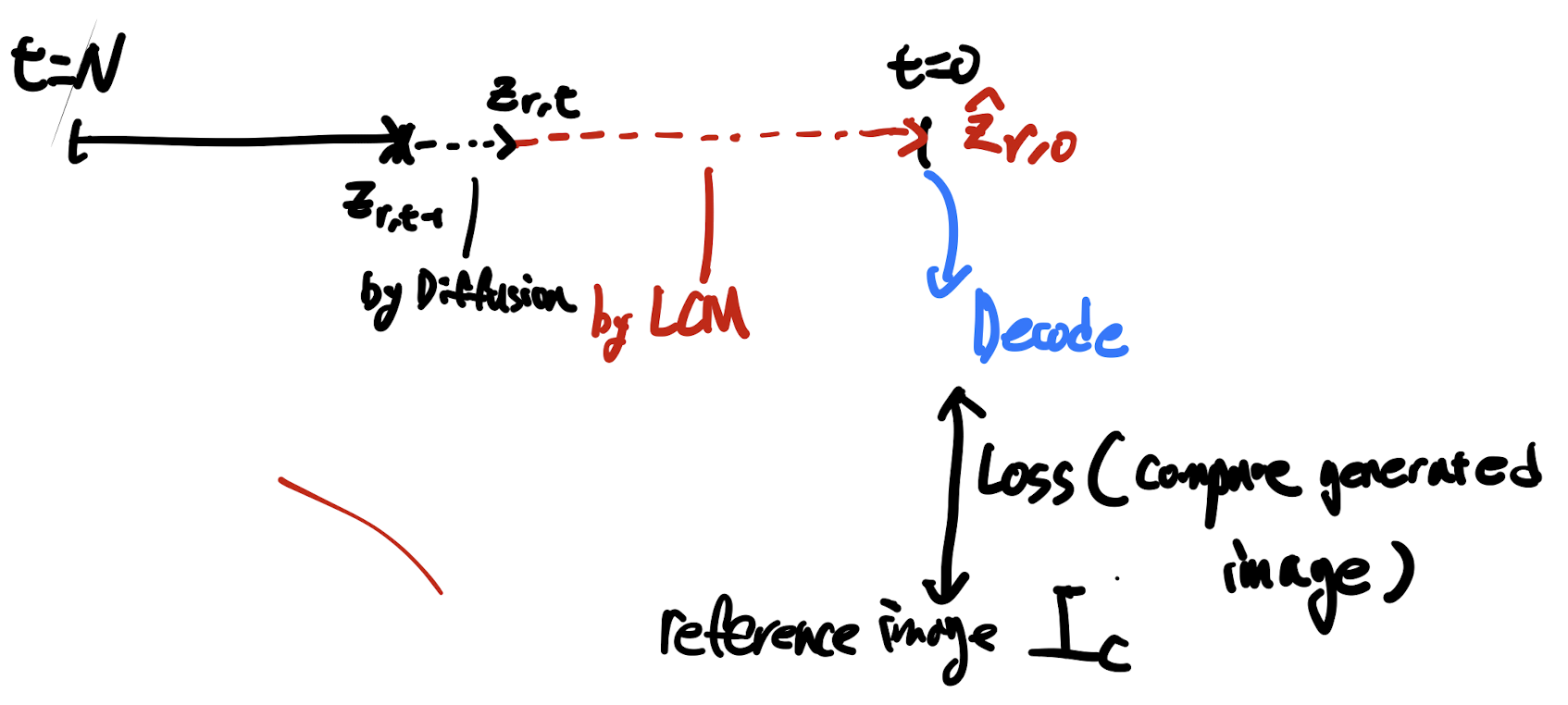

- ("Lookahead loss") When training an encoder for models like IP-Adapter, they use a single-pass generation model such as a Consistency Model (LCM) to produce a preview image from the noisy latent. The preview is compared with the reference image to compute an additional loss (the LCM-lookahead loss).

- However, the LCM can end up minimizing the loss regardless of its input (z_rt below), which could break its alignment with the original model and defeat the purpose of the loss. To prevent this and regularize the LCM's generation capability, they randomly sample the LoRA scale within a specific range.

- (Additional reference-image encoding through keys and values) The key-value (KV) pairs of the noisy reference image are computed with a copy of the denoising network and concatenated with the KV pairs of the target image (the main image being generated), as shown on the left side of Figure 3.

- The KV encoder is initialized with parameters copied from the original UNet.

- If the KV encoder is kept completely frozen, it can cause "excessive appearance transfer" or a "loss of editing capability," so it is made trainable with LoRA (this part is a bit unclear to me).

- (Synthetic data generation for personalization) They exploit the mode-collapse behavior of SDXL-Turbo (similar prompts produce very similar images, so the same face keeps reappearing) to generate multiple images of the same identity, building a dataset of around 500k images.
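To make the lookahead loss and the random LoRA scale from the first two bullets more concrete, here is a minimal NumPy sketch. It is not the paper's implementation: `toy_denoiser` is a hypothetical linear stand-in for the UNet with an LCM-LoRA, the `scale_range` values are made up, a cosine distance stands in for the identity loss, and there is no VAE decoding or gradient computation. It only shows the order of operations: add noise, sample a LoRA scale, take a single-pass preview, and score the preview against the reference.

```python
import numpy as np

def add_noise(z0, eps, alpha_bar):
    """Forward diffusion: z_t = sqrt(alpha_bar) * z0 + sqrt(1 - alpha_bar) * eps."""
    return np.sqrt(alpha_bar) * z0 + np.sqrt(1.0 - alpha_bar) * eps

def predict_clean(z_t, eps_pred, alpha_bar):
    """Single-pass preview: invert the forward process using a noise prediction."""
    return (z_t - np.sqrt(1.0 - alpha_bar) * eps_pred) / np.sqrt(alpha_bar)

def toy_denoiser(z_t, w_base, w_lora, lora_scale):
    """Hypothetical stand-in for the UNet: base weights plus a scaled LoRA delta."""
    return z_t @ (w_base + lora_scale * w_lora)

def cosine_distance(a, b):
    """1 - cosine similarity; stands in for an identity loss on face features."""
    return 1.0 - float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def lookahead_loss(z0, ref_feat, w_base, w_lora, alpha_bar, scale_range, rng):
    eps = rng.standard_normal(z0.shape)
    z_t = add_noise(z0, eps, alpha_bar)
    # Randomly sample the LoRA scale so the preview path is regularized.
    lora_scale = rng.uniform(*scale_range)
    eps_pred = toy_denoiser(z_t, w_base, w_lora, lora_scale)
    preview = predict_clean(z_t, eps_pred, alpha_bar)
    return cosine_distance(preview, ref_feat), lora_scale

rng = np.random.default_rng(0)
d = 8
z0 = rng.standard_normal(d)            # clean latent of the training image
ref_feat = rng.standard_normal(d)      # features of the reference image (toy)
w_base = 0.1 * rng.standard_normal((d, d))
w_lora = 0.1 * rng.standard_normal((d, d))
loss, scale = lookahead_loss(z0, ref_feat, w_base, w_lora,
                             alpha_bar=0.5, scale_range=(0.2, 1.0), rng=rng)
print(f"lookahead loss = {loss:.3f} at LoRA scale {scale:.2f}")
```

In a real training loop the loss would be backpropagated into the encoder through the preview, which is what makes an image-space loss usable at arbitrary noise levels.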

Key Insight

- Single-pass models (consistency models, progressive distillation, etc.) distilled from the original model can be used to apply an "image-space loss".

- The distilled model and the original model are "aligned": they generate similar outputs given an identical prompt and initial noise.

- Here the target task was personalization, but I believe the approach can also solve other tasks that need an image-space loss (for instance, optimizing an aesthetic score).

- The keys and values of the noisy reference image carry the features of the target identity.

- Diffusion models trained with a discriminator (e.g. SDXL-Turbo) are prone to mode collapse, and this can be exploited to generate synthetic personalization data with a consistent identity.
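The key-value concatenation can be sketched as a self-attention layer whose keys and values are extended with those of the reference image. The snippet below is a minimal single-head NumPy illustration under my own assumptions (the function name `extended_self_attention`, the shapes, and the random inputs are all hypothetical); the actual model applies this inside the UNet attention layers, with the reference KV coming from the LoRA-tuned copy of the network.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def extended_self_attention(q, k, v, k_ref, v_ref):
    """Attention where target queries also attend to reference keys/values.

    q, k, v:      (n_tgt, d) projections of the target image tokens
    k_ref, v_ref: (n_ref, d) projections of the noisy reference image tokens
    """
    k_all = np.concatenate([k, k_ref], axis=0)   # (n_tgt + n_ref, d)
    v_all = np.concatenate([v, v_ref], axis=0)   # (n_tgt + n_ref, d)
    scores = q @ k_all.T / np.sqrt(q.shape[-1])  # (n_tgt, n_tgt + n_ref)
    weights = softmax(scores, axis=-1)
    return weights @ v_all, weights

rng = np.random.default_rng(0)
n_tgt, n_ref, d = 4, 6, 8
q, k, v = (rng.standard_normal((n_tgt, d)) for _ in range(3))
k_ref, v_ref = (rng.standard_normal((n_ref, d)) for _ in range(2))
out, weights = extended_self_attention(q, k, v, k_ref, v_ref)
print(out.shape, weights.shape)  # (4, 8) (4, 10)
```

Because only the keys and values are extended, the output keeps the shape of the target tokens, so the layer can be dropped in without changing the rest of the network.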
