[Reels] Imagine yourself

BayesianBacteria, 2024. 10. 15. 21:49

Paper: personalized text-to-image generation by Meta AI

Insights

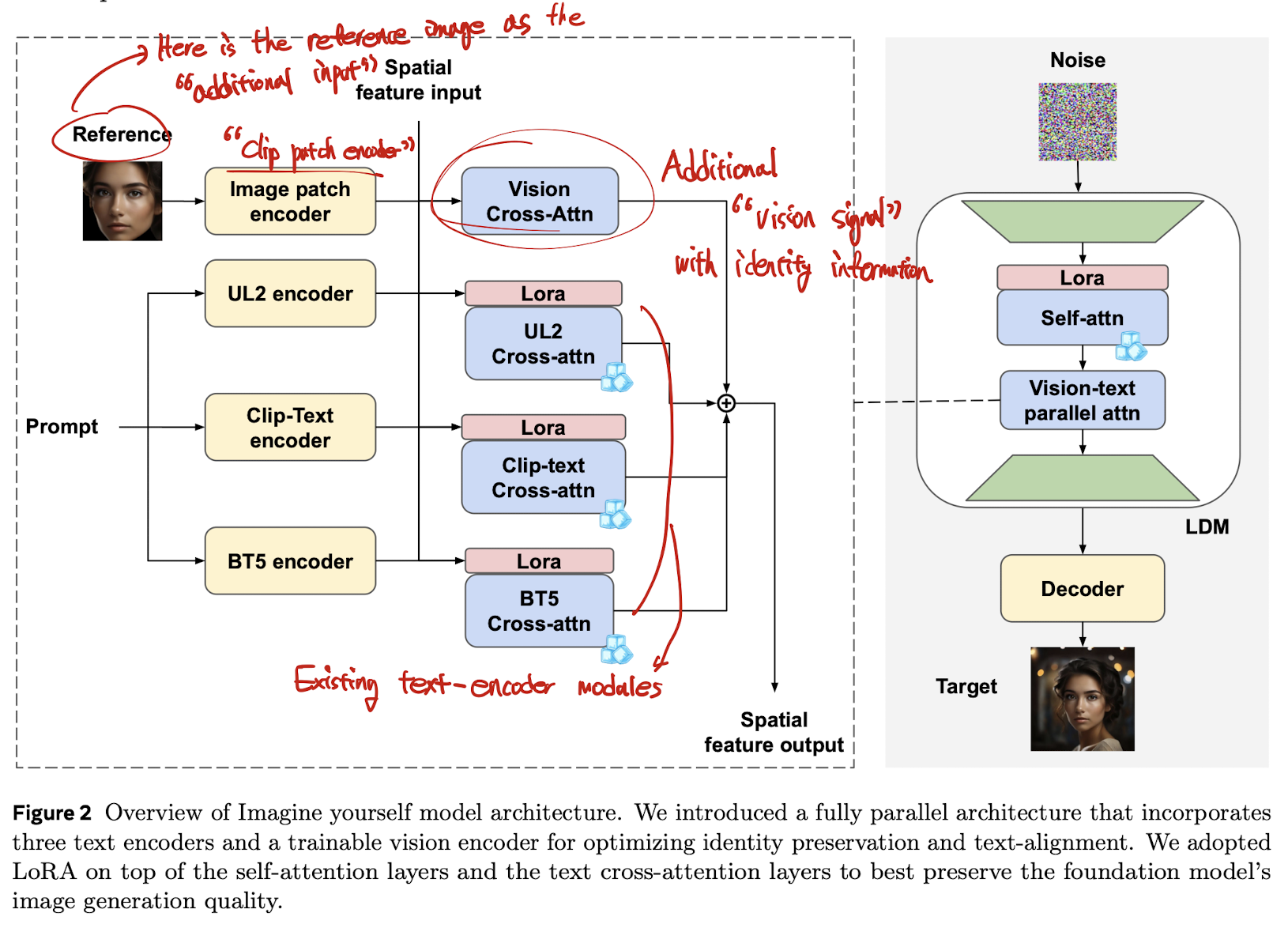

They create an “architecture tailored for personalization image generation”.

- They design a kind of improved IP-Adapter for “any-personality” generation.

- Therefore, the model does not need to be trained for each new subject, unlike LoRA or DreamBooth.

- Meanwhile, other “any-personality” generation models can overfit strongly to the reference image, for example by copy-pasting it. (The synthetic pair dataset described below addresses this.)
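
To make the "IP-Adapter-like" idea concrete, here is a minimal sketch of decoupled cross-attention as used in the original IP-Adapter: the latent queries attend to text tokens and to reference-image tokens separately, and the two results are summed. This is an assumption about the general mechanism, not the exact architecture in the paper. The learned query/key/value projections are omitted, and `ref_scale` is a hypothetical knob name.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(queries, keys, values):
    """Single-head scaled dot-product attention over lists of vectors.

    Learned projections are left out (identity) to keep the sketch short.
    """
    scale = 1.0 / math.sqrt(len(keys[0]))
    out = []
    for q in queries:
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[d] for w, v in zip(weights, values))
                    for d in range(len(values[0]))])
    return out


def decoupled_cross_attention(latents, text_tokens, ref_tokens, ref_scale=1.0):
    """Sum of text cross-attention and reference-image cross-attention.

    ref_scale (hypothetical name) controls how strongly the reference
    identity is injected; 0.0 falls back to text-only conditioning.
    """
    text_out = attend(latents, text_tokens, text_tokens)
    ref_out = attend(latents, ref_tokens, ref_tokens)
    return [[t + ref_scale * r for t, r in zip(t_row, r_row)]
            for t_row, r_row in zip(text_out, ref_out)]


if __name__ == "__main__":
    latents = [[1.0, 0.0], [0.0, 1.0]]                 # 2 image-latent queries
    text_tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 prompt tokens
    ref_tokens = [[0.5, 0.5]]                           # 1 reference-image token
    print(decoupled_cross_attention(latents, text_tokens, ref_tokens))
```

Because the reference branch is a separate additive term, no per-subject fine-tuning is needed at inference time: swapping the subject only means swapping `ref_tokens`.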

Synthetic pair dataset for the personalization task

- A limitation of existing personalization methods is the “copy-paste” effect: the generated image looks nearly identical to the given reference image.

- In other words, the generated image “does not follow” the given prompt.

- To resolve this issue, the authors propose a synthetic-data pipeline that collects several real and synthetic images of the same identity.

- Sadly, the details of the pipeline are not included (for example, how the synthetic “personalized images” are generated).
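
Since the pipeline details are not given, the following is only a sketch of the pairing idea itself: if every training pair uses a reference image that differs from the target image of the same identity, simply copying the reference cannot reproduce the target. All names here (`Sample`, `TrainingPair`, `build_pairs`, the file names) are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass
from itertools import permutations


@dataclass(frozen=True)
class Sample:
    identity: str      # who is in the image
    image_path: str    # hypothetical file name
    caption: str       # prompt describing this particular image
    synthetic: bool    # True if generated, False if a real photo


@dataclass(frozen=True)
class TrainingPair:
    reference: Sample  # conditioning image
    target: Sample     # image the model should generate from target.caption


def build_pairs(samples):
    """Pair every image with every *other* image of the same identity."""
    by_identity = {}
    for s in samples:
        by_identity.setdefault(s.identity, []).append(s)

    pairs = []
    for group in by_identity.values():
        # permutations(group, 2) never pairs a sample with itself
        for ref, tgt in permutations(group, 2):
            pairs.append(TrainingPair(reference=ref, target=tgt))
    return pairs


if __name__ == "__main__":
    data = [
        Sample("alice", "alice_real.png", "a portrait photo", False),
        Sample("alice", "alice_syn_1.png", "riding a horse on a beach", True),
        Sample("alice", "alice_syn_2.png", "as an astronaut, full body", True),
    ]
    for pair in build_pairs(data):
        print(pair.reference.image_path, "->", pair.target.image_path)
```

Identities with only one image produce no pairs, which is why several images per identity are needed in the first place.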

Rationale for their text encoders

- (common space between image and text) CLIP

- (encoding “characters”) ByT5: byte-level (character-level) T5 architecture. (It might improve “text image” generation, i.e., rendering text inside the image, such as a sign reading “moreh is cool”.)

- (comprehending long and intricate text prompts) UL2: an “improved T5”
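
To illustrate why a byte-level encoder can help with rendered text, here is a small sketch of ByT5-style tokenization: every UTF-8 byte becomes its own token, so the spelling of “moreh is cool” is visible to the model letter by letter. The offset of 3 (ids 0, 1, 2 reserved for pad, EOS, and unknown) reflects my understanding of the ByT5 vocabulary and should be treated as an assumption. The helper names are hypothetical.

```python
# Assumption: ids 0, 1, 2 are reserved for <pad>, </s>, <unk>,
# so byte value b maps to token id b + 3.
SPECIAL_OFFSET = 3
EOS_ID = 1


def byte_level_ids(text):
    """Encode text as one token per UTF-8 byte, followed by EOS."""
    return [b + SPECIAL_OFFSET for b in text.encode("utf-8")] + [EOS_ID]


def decode_ids(ids):
    """Invert byte_level_ids, skipping special tokens."""
    raw = bytes(i - SPECIAL_OFFSET for i in ids
                if SPECIAL_OFFSET <= i < SPECIAL_OFFSET + 256)
    return raw.decode("utf-8")


if __name__ == "__main__":
    ids = byte_level_ids("moreh is cool")
    print(len(ids), ids)
    print(decode_ids(ids))
```

A subword tokenizer might instead split an unusual word like “moreh” into a few opaque pieces, which hides its exact spelling. The byte-level view trades longer sequences for explicit character information.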

Limitations

- The method is only applicable to models with cross-attention text conditioning,

- at least for the architecture proposed in the paper.

- To apply it to an SD3-like architecture (without cross-attention), it needs to be adjusted.
